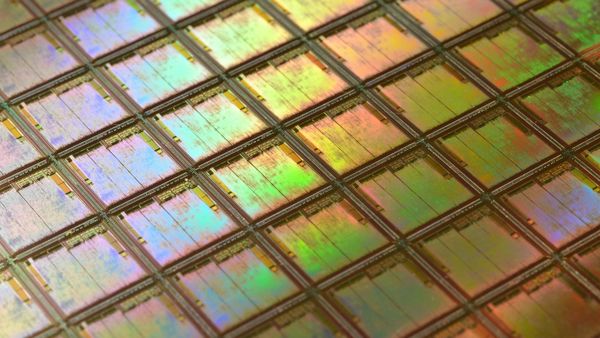

The AI industry has spent the past three years pouring hundreds of billions of dollars into making models bigger. Adaption, a San Francisco startup founded by former Cohere VP of research Sara Hooker and former Cohere director of inference Sudip Roy, has spent that time arguing the industry is solving the wrong problem.

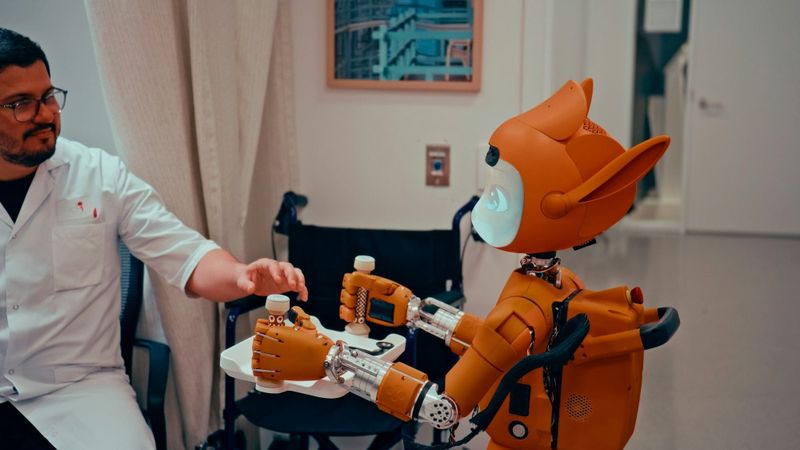

AutoScientist, the product Adaption launched on Tuesday, is designed to automate the fine-tuning of AI models for specific tasks. Today that workflow requires teams of specialist machine learning engineers to run iterative experiments over weeks or months, optimising the training data and the model parameters together.

The tool builds on Adaption's existing Adaptive Data platform, which generates and refines high-quality datasets on the fly, and extends the automation loop to the model itself. Rather than treating data preparation and model training as separate steps managed by different teams, AutoScientist co-optimises both in a single process, automatically discovering the most efficient path to teaching a model a specific capability.

"What's super exciting about it is that it co-optimizes both the data and the model, and learns the best way to basically learn any capability," Hooker told TechCrunch. "It suggests we can finally allow for successful frontier AI trainings outside of these labs."

The last sentence is the important one. Today, the ability to train or substantially fine-tune a frontier-class AI model is restricted to a handful of laboratories (OpenAI, Anthropic, Google DeepMind, Meta and a small number of well-funded startups). The process requires not only computing power but deep expertise in data curation, training dynamics and hyperparameter optimisation. If AutoScientist can meaningfully automate that expertise, it would lower the barrier to entry for organisations that have domain-specific data and use cases but lack the machine learning research teams to exploit them.

Adaption claims AutoScientist more than doubled win rates across different models in internal testing. The company acknowledges that conventional benchmarks do not capture what the system does, because it adapts models to specific tasks rather than optimising for generic performance metrics.

Hooker's intellectual pedigree gives the claims more weight than a typical startup product launch would warrant. Before co-founding Adaption, she spent five years as a research scientist at Google DeepMind and led Cohere's research division. Her widely cited 2020 paper, "The Hardware Lottery," argued that ideas in AI succeed or fail based on whether they fit existing hardware rather than on their inherent merit. That argument anticipated much of the current debate about whether scaling alone can deliver artificial general intelligence.

Adaption raised $50 million in seed funding in February from Emergence Capital Partners, Mozilla Ventures, Fifty Years and others, and has not disclosed its valuation. It sits within a growing cohort of "neolabs" founded by senior researchers who have left the established AI companies to pursue architectures and training methods that challenge the scaling orthodoxy.

AutoScientist is available with a 30-day free trial. Whether it delivers on the promise of democratising frontier-quality fine-tuning will depend on how it performs outside Adaption's own testing environment, in the hands of enterprise customers with messy data, specific requirements and limited machine learning expertise. If it works, it could do for model training what cloud computing did for infrastructure: turn a capability that once required dedicated teams and specialised knowledge into a service anyone can use.

The recap

- Adaption launched AutoScientist to automate model fine-tuning.

- Adaption says AutoScientist more than doubled win rates across models in internal testing.

- The tool is free to use for the first 30 days.