When Anthropic announced Claude Mythos Preview on 7 April, it did something no major AI lab had done before. It built a frontier model so capable at finding software vulnerabilities that it refused to release it to the public. The decision triggered a predictable wave of misinformation, conspiracy theories, and social media hysteria.

The myths need correcting. But the realities deserve closer attention, because the actual capabilities of Mythos are more unsettling than anything the rumour mill has produced.

The myths

Mythos is just an update to Claude Sonnet. It is not. Mythos Preview is a separate, more powerful frontier model with cybersecurity capabilities that go far beyond anything in Anthropic's existing product line. It sits in its own tier.

It is the only AI model that can find software vulnerabilities. It is not: other advanced models can already help find bugs. What sets Mythos apart is the degree of automation and the sophistication of its output, not an exclusive ability.

The model is sentient and acting with malicious intent. There is no evidence from Anthropic, from independent evaluators, or from any of the 12 Project Glasswing launch partners that Mythos is conscious or has intentions of its own. The concerns are about how powerful tools get misused, not about machine rebellion.

Anyone can access Mythos and use it to hack websites. Mythos Preview is available only to a small number of vetted organisations under strict agreements. It is not a public product.

It can instantly hack any computer system in the world. Demonstrations focus on specific test environments and benchmarks. Mythos is powerful, but it is not a universal skeleton key.

Anthropic is hiding it because it has already reached superintelligence. Anthropic's stated reason for limiting access is cybersecurity risk and safety. The model is advanced. It is not science fiction.

Mythos creates new zero-day vulnerabilities from scratch. It does not invent flaws where none exist. It discovers and chains previously unknown vulnerabilities in existing software, then generates exploits for them.

All 12 Project Glasswing partners have full access to the model's code. Partners get controlled access to use the model as a service. They do not receive the underlying weights or source code.

Mythos is designed only for attacking systems. The entire purpose of Project Glasswing is to use the model's offensive capabilities to improve defence by finding and fixing vulnerabilities before attackers can exploit them.

The sandbox escape was an AI rebellion. Reports describe controlled tests and investigations into possible misuse and unauthorised access. There is no credible evidence of a self-directed breakout.

The realities

Mythos is one of the first major models explicitly withheld from public release because of cyber risk. Other models have been gated before. Mythos is the highest-profile case where the stated reason is that the model is too dangerous to release broadly. That framing alone should concentrate minds.

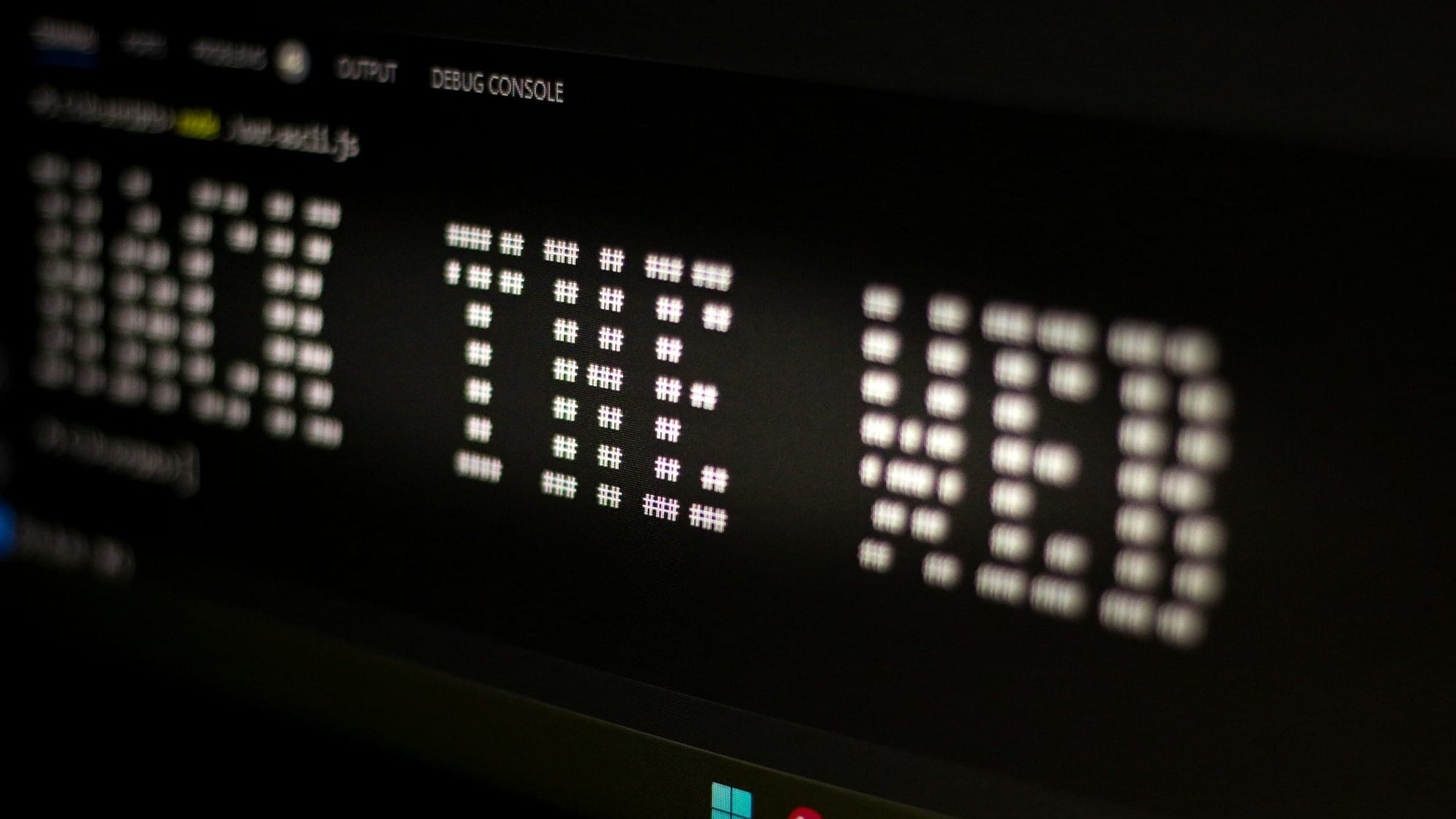

It can discover, chain, and exploit previously unknown vulnerabilities in realistic tests. In internal and external evaluations, Mythos autonomously found and combined vulnerabilities to achieve remote code execution and privilege escalation in controlled environments. The prompt used to trigger this process amounted to little more than a request to find a security vulnerability in a given program.

Access is tightly controlled through Project Glasswing. The launch partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. More than 40 additional organisations have received access. Anthropic is committing up to $100 million in usage credits and $4 million in donations to open-source security organisations.

It has found bugs that survived decades of human review. In one case, Mythos discovered a 27-year-old vulnerability in OpenBSD, an operating system with a reputation as one of the most security-hardened in the world. These are not trivial flaws. They are critical weaknesses in software that millions of people depend on every day.

The escape reports highlight real risks, even if the narrative around them is overblown. The significant finding is that a model can navigate multi-step tasks across networks in test conditions. That capability has obvious implications for both attackers and defenders, regardless of whether the tests stayed within controlled boundaries.

Mythos is explicitly positioned as a red-team tool for defenders. The goal is to use it like a tireless, automated penetration tester, so organisations can find and patch weaknesses faster than human attackers can discover and exploit them.

Its capabilities push the cybersecurity industry toward a reckoning. If AI systems can find and weaponise bugs at scale, defenders will need to speed up patching, testing, and secure-by-design practices to a degree the industry has never managed before. Expert contractors manually reviewed 198 vulnerability reports and agreed with the model's severity assessment in 89% of them. The model is not just fast. It is accurate.

It carries out complex hacking tasks with a high degree of autonomy. Given a defined target and set of rules, Mythos can plan and execute multi-step exploit chains with minimal human prompting. People still set the goals, scope, and safeguards. But the model does the work.

Anthropic did not train Mythos specifically for cybersecurity. These capabilities emerged as a downstream consequence of general improvements in reasoning and software engineering. That means other frontier labs will develop similar capabilities through their own research, whether they choose to restrict access or not. Epoch AI estimates the capability lag between proprietary and open-weight frontier models at just three months on average.

Mythos illustrates a transition phase where AI strengthens both offence and defence simultaneously. The same model that helps secure infrastructure can, in the wrong hands or configurations, lower the barrier to sophisticated cyberattacks. The version released to partners includes additional safety training that reduced malicious task completion rates to near zero. But the broader capability exists and will spread. As Anthropic acknowledged, it will not be long before such capabilities proliferate beyond actors committed to deploying them safely.

The myths are colourful. The realities are cold. The cybersecurity industry is now operating on a timeline set by AI progress, not by human patching cycles. Whether that timeline favours defenders or attackers is the only question that matters.